Sound is the most important medium we use when communicating – unless, of course, we use sign language.

Most of us assume that the auditory signal alone is enough for others to understand us, and some people raise their voice to make sure they are “heard”. Most of my clients are surprised to learn that sound alone is not enough to communicate effectively and that other cues, especially visual ones, affect our perception and understanding.

Whenever we talk with someone whom we can also see, we automatically watch the movements of the other person. We look at the lips, the articulatory movements, the gestures.

When listening is difficult, for example when there is background noise or when we speak with someone in a language that we (and/or they) are not fluent in, we may struggle to understand what is said.

This is especially true when what we hear and what we see (or seem to see) do not match. We are easily irritated when, for example, we watch a movie and the audio and the image on the screen are out of sync (cf. Albert Costa, The Bilingual Brain: And What It Tells Us about the Science of Language, 2020).

A very interesting phenomenon called the McGurk Effect shows what can happen. It is an audiovisual illusion that clearly shows how the same sound can be perceived very differently depending on what we see. For example, a recording of /ba/ can be heard as /va/ when it is paired with a video of a speaker whose lips are forming /va/.

Accurate perception can involve more than one sensory system (in this case, vision together with hearing), which is called multimodal perception. In fact, our senses did not evolve in isolation from each other; they work together to help us perceive the world. When several senses are stimulated at the same time, the brain has an information-rich learning experience (see 1:00-1:45 of the following video).

Try to analyze the sounds you see and hear in this video:

What this means for bilingual language acquisition in babies

When babies acquire languages, they try to build their sound inventory by connecting visual and auditory cues, which helps them discriminate between languages.

Babies between four and six months old are able to differentiate between French and English simply by watching silent videos of people speaking those languages! (Albert Costa, Chapter 1, “Bilingual Cradles”)

Costa focuses on babies who are between four and six months old because during that time babies fix their gaze on the mouth of a person.

I can confirm that this ability to differentiate between languages by only focussing on the articulatory movements of the lips can be maintained and fostered throughout life.

I recently tried this experiment myself by switching off the volume and watching only the lip movements. After a few words, I managed to recognize the languages: Italian, German, French and English.

My personal history may have something to do with this ability. When I was 4 years old, I was hearing impaired for almost a year. I had suffered from chronic ear infections since birth, but around that age they became very severe: my eardrums burst about 23 times during those years, regularly impairing my hearing. My parents were not aware of how severe my hearing impairment was, because I had automatically learned to read their lips. I remember hearing sounds muffled (as when we swim underwater), but I could only understand what people were saying when I could see their lip movements.

I acquired German and Italian during those years, and this impairment did not affect my languages in any way. In fact, my parents and the doctors were surprised and shocked when they realized how little I was actually hearing. Fortunately, at age 5 a simple tonsillectomy made the ear infections decrease and eventually stop, and I could finally hear without any impairment. People would even say that my hearing had become especially sharp. At school I could understand what people were whispering and could hear sounds others couldn’t hear; my mother used to say that I could hear bats (which wasn’t really true).

What this means for language acquisition and learning

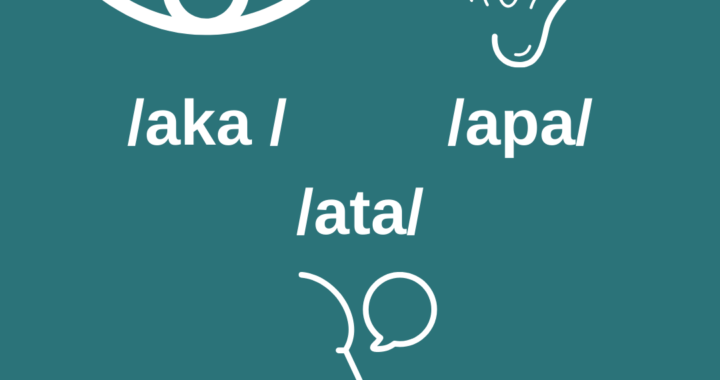

Whether we learn a new language later in life or acquire languages as babies, we need to learn to distinguish the sounds that are contrastive in that language: its phonemes.

Phonemes are the smallest units of sound that distinguish words. In English, bat, cat, mat and fat differ by only one phoneme (/b/, /k/, /m/, /f/). These phonemes are contrastive, i.e. replacing one with another produces a different word with a different meaning. When we struggle to acquire contrastive phonemes, we make mistakes, for example by hearing or saying one word when another was meant.

Children whose first language is Chinese may struggle to hear the difference between l and r, unless they are also exposed to a language in which these are two contrastive phonemes, as in English (rack, lack), German (Latte, Ratte) or Italian (lutto, rutto). Studies show that babies who are exposed to such contrasts learn to tell the sounds apart, but babies who are not exposed to them before they reach 12 months of age apparently lose this ability:

“after just twelve months of exposure to a language in which the contrast between the two sounds in question was not relevant, the ability to differentiate those sounds was lost (or at least significantly reduced). This shows that the passage of time is critical in terms of our ability to distinguish sounds” (Costa, p. 18).

This loss of sensitivity seems to be accompanied by an increased sensitivity to subtle differences between the phonemes of the language the baby is exposed to.

Personally, I doubt that this sensitivity is ever completely lost. I would rather assume that the focus simply shifts to the languages that matter most to the child at that developmental stage.

In fact, my observations and my own experience show that even later in life we are still able to distinguish between contrastive sounds in other languages. I would agree that it is more difficult to distinguish sounds that are very different from those in our own inventory, but it is not impossible. Distinguishing these sounds may not come as intuitively and naturally to us as it does to babies, but just as we can learn new languages later in life, we can also learn the contrastive phonemes of those languages. Costa mentions that the loss of this capacity explains why people who learn another language after childhood usually have an accent. However, there are many people in the world who learned languages later in life, have little or no accent, and do not make the “mistakes” one would expect.

I do agree, though, that if all of one’s languages are non-tonal, it takes training to hear the differences in pronunciation and intonation in a tonal language, in the same way that an adult native speaker of Chinese may struggle to distinguish /l/ from /r/.

What is your experience with acquiring and learning sounds in a new language?

Have you experienced the McGurk Effect?

Please share it in the comments below.

I did experience the McGurk Effect when listening to the samples. I found the study very interesting.

Thank you, Nathan, for sharing your experience. It is quite impressive, right?

Good read. This article led me to think about the roles of bottom-up and top-down processing in listening comprehension, particularly in an L2 or L3. I once read that many L2 learners tend to focus more on developing their bottom-up processing skills during listening practice than on developing their top-down listening skills. As a result, their bottom-up skills eventually far surpass their top-down skills, leaving their overall comprehension incomplete. Is this true?

The reason I ask is that, as an L2 (technically L3) learner myself, I sometimes come across a short, audio-only clip in which one particular phoneme leads a group of listeners (or a group of L2 learners) to disagree about which phoneme they heard (the Mandela effect). Now, assuming the clip came from a much longer video, if I were to watch the whole video, having been given its title and perhaps a short written introduction beforehand, the role of top-down processing in listening comprehension would become much more apparent. Ultimately, I am trying to find out whether improving my top-down processing skills can fill the gaps in my understanding caused by inevitable auditory illusions. A natural follow-up question is how common it is for L2 learners to neglect training their top-down processing skills in favor of their bottom-up processing skills (I suspect I have been one of those people for a while now!). Thanks.

Thank you very much for this important observation about top-down and bottom-up approaches to learning languages. I think a combination of both, with constant switching between them, gives the best results, for the very reasons you mention yourself. When learning a new language, we constantly check whether a sound, a form, etc. matches something we already know, and we link the “new” to the “old” to build a network of correlations on all levels of language. This can lead us to use forms from one language in another and cause misunderstandings. For example, “Wie is dat?” (Dutch: Who is that?) and “Wie geht’s dir?” (German: How are you?) can lead to the ungrammatical German “*Wie sitzt da?” (literally “How sits there?”, instead of “Wer sitzt da?”, Who is sitting there?). Whenever I learn a new language, I combine both top-down and bottom-up approaches. In my opinion, a purely bottom-up approach that ignores or suppresses the other one is like learning to play a new instrument while ignoring the fact that we can already read music… With every new language we learn, we can transfer the skills we already have in our other languages to the new one.

I hope that makes sense. Please let me know whether I have answered your question. Kind regards, Ute